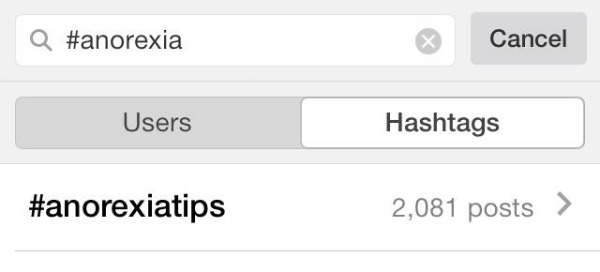

Today I’ve got to sidestep away from our usual waffling about fake tan and leggings and write about something that affected me quite a lot last night. I was having my usual browse through Instagram and came across a hashtag that caught my eye – #anorexia.

Out of intrigue I clicked on the link, thinking that surely there wouldn’t be that many posts associated with this mental illness? I was unfortunately really, really wrong with this assumption. What I found as I explored the topic deeper genuinely disturbed me, and eventually compelled me to write this post so that we’re all much more aware of what exactly is being portrayed on the photo sharing site that many of us use every day – and just how easily accessible this damaging content is for people of all ages.

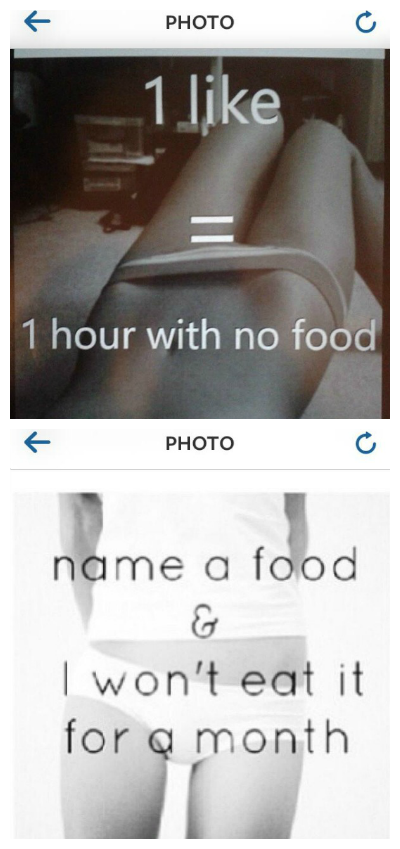

There are over one and a half million posts dedicated to anorexia on Instagram. What the vast majority of these photos represent is a massive online community of those who are either suffering with, or are very interested in, anorexia. This community of users are regularly sharing genuinely disturbing content including ‘inspirational’ photos of emaciated girls, encouraging quotes related to not eating and weight loss, and frankly horrific images such as these below:

Users are actually interacting with these photos, actively encouraging each other to give up certain food or go without eating at all. Whether or not the people behind these accounts are genuinely following through with the promises is unknown, but the photos reveal the extent to which this community of impressionable young girls is thriving on the site.

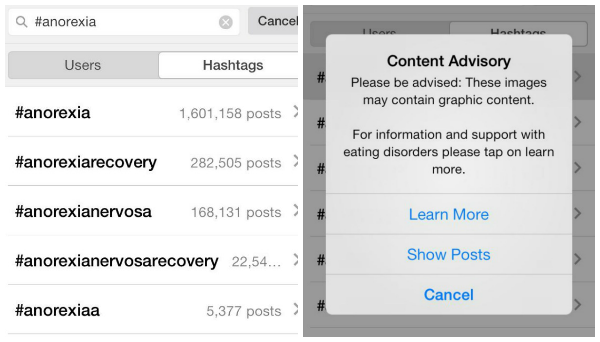

Arguably, you’re always going to have this sort of content somewhere on the internet. But what I found most interesting is the part that Instagram itself actually plays in this pro-ana community.

When you click on the link, the above warning pops up. To me, this is actually really disturbing – it shows that Instagram are monitoring the hashtag, have recognised its connection to those suffering from a mental illness, and yet continue to give them the option to view it anyway. Is this really their idea of safeguarding? You could argue that it’s not Instagram’s responsibility to control what is shared on the site; but then when you consider what happens when you try to search for sexually explicit content:

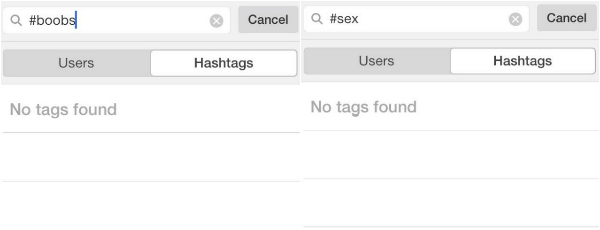

It seems that Instagram have taken the steps to block this content from users. Clearly naked bodies are judged to be far more damaging to young users than hashtags such as this:

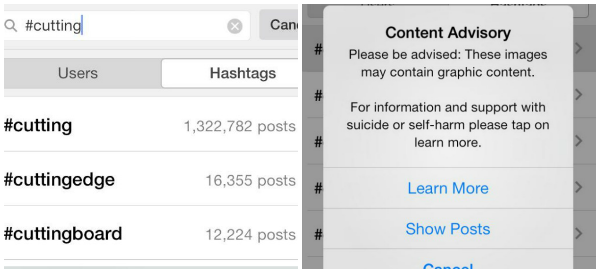

As I looked further into the pro-anorexia content, I also came across a disturbing crossover into the world of self-harm and teenage depression. ‘#Cutting’ brings up the same warning as the anorexia tag, this time seeking to advise users on suicide and self-harm. Once again though, they’re welcome to click through the warning and access over a million images focused around self-loathing and physical harm.

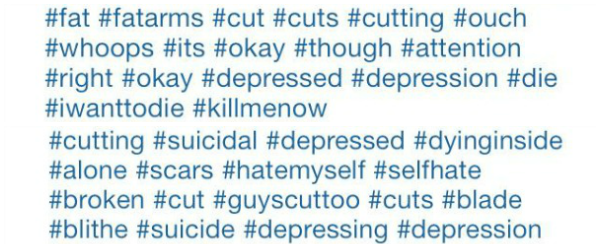

I’ve chosen not to screenshot any of the actual images themselves, but some of them are incredibly graphic shots of deep cuts and arms covered in blood. All bear captions claiming that the person behind the account has just made those cuts, and immediately uploaded the photos to Instagram. Here’s a snippet of just some of the hashtags used alongside these images, which again, Instagram are clearly making no effort to monitor or censor:

I won’t pretend that I understand what the people behind these accounts are thinking or feeling. Whether they’re all genuine sufferers, are crying out for attention or are just caught up in the subculture isn’t something you can assume from looking at any of the accounts. All you can really tell is how potentially damaging this all is. Most of the users are incredibly young, some describing themselves as being as young as 12 on their profiles. And if all you have to do to access images of sliced up wrists and starvation is to have access to a smartphone and click through one ‘warning’ from Instagram, then you can imagine just how many impressionable young people these warped images and ideas can be reaching. I have four nieces and to think that any of them could view content like this and be drawn into these communities is genuinely distressing.

The argument will always be there that responsibility for this lies with parents, who should be monitoring what sites their kids are accessing. In theory this is obviously true; in reality kids and teenagers will always find a way around it. There’s also the argument that this conversation, if removed from Instagram, would continue on other platforms – whether that’s Tumblr (where I know this is also a big problem), forums or chatrooms. However, surely Instagram – as one of the most popular and most accessed applications in the smartphone industry – have a responsibility to remove this sort of content from their site? No, it won’t solve the wider problem at hand – but that’s no reason to permit these communities to exist.

What do you all think of this topic? Should Instagram be doing more to prevent this kind of content?

So glad you did this post, my cousin has got an unhealthy obsession with anorexia and I noticed a few weeks ago she started liking lots of posts with these hashtags! It’s disgusting how Instagram can allow this, it would stop so many people from delving into these topics if they had the same barriers on these tags as the sexual ones you mentioned!

Oh God, that’s awful 🙁 so true, it’s stuff that some people wouldn’t even know about or think about if it wasn’t so easy to stumble across..! xx

This is so disturbing! You can clearly see that Instagram stop anything of a sexual nature, but this is fine! It should be the other way round, if anything! They should just have a warning to see boobs, not disturbing images of girls trying to help others damage themselves!

I remember there being a documentary (with Fearne Cotton if I remember rightly…) a while back about pro anorexia websites and I just couldn’t believe it. I suppose it’s hard to monitor what is online but there are so many campaigning for ‘no more page 3’ when this is going unnoticed!

(sorry, bit of a rant there wasn’t it… great post!)

Jenn | Photo-Jenn-ic

x

Completely agree with you, age restrictions on 18+ material would make sense – but to ban anything like that completely and let all this through just seems completely illogical.

I’ve seen a programme before about the websites too, I think thankfully a lot of them are now blocked by Google etc – but Instagram’s one of the biggest apps in the world so what’s the point if they can access it there?!

Glad other people are as horrified by this as I was! xx

I really wanted to tell you how beautifully written this post is. It’s really informative but not ‘in your face’. I’ve been an avid reader for a few months now and genuinely love every post, be it fashion, beauty or life related. You and Lauren put so much effort into every post and anyone who reads them does appreciate it. And your humour is effing awesome. Hope this doesn’t come across too mushy! X

Thank you so much Tara, this comment has meant a lot to both of us 🙂 So glad you enjoy the gunk that we put out there aha! Thanks for reading us xx

This is really shocking, I don’t understand why they would let such harmful content be easily accessed on their site? If they’re prepared to block nudity then they should be prepared to block self-harm and anorexia images. I’ve stumbled across pro anorexia sites and images before and they are really shocking to see. Great, well written post 🙂 xx

Kat from Blushing Rose

It really surprises me that they can acknowledge it enough to put warnings but then not have thought to just block it completely?!

I went through and reported all of the accounts that I saw posting the images, but they should surely be monitoring this themselves xx

WTF this is so bad it has actually made me feel sick. I didn’t even know this kind of thing existed on Instagram, it should be banned completely!

Great informative blog post x

Thank you Clo! It really is horrible to see, the actual photos were disgusting – and I’m 23, who knows how a 13 year old will feel seeing them xx

I agree, I don’t think this should be readily available on Instagram. If they ban it, it will move elsewhere, but that’s no reason not to ban it from their site, especially given how accessible Instagram is with smartphones etc. I do agree that parents have to take responsibility too; are things like this not exactly why 12-year-olds shouldn’t have smartphones?

Lauren

livinginaboxx

Definitely, though I can see how parents can be pressured by their kids to allow them to have what everyone else has etc. Instagram must be aware that a lot of their users are young though, so should really be thinking about these problems. And then there’s the point that plenty of older teens/even adults could be affected by this sort of content too! xx

Brilliant post! As an ex-self harmer and someone who’s struggled with eating issues all my life, this kind of thing is rather alarming! It really is frightening that such content is SO easily available for anyone to read, particularly those who are already vulnerable or struggling with the aforementioned issues. I suspect that it’s almost like a competition for some – who can go the longest without eating, who can cut the deepest? Scary shit!!

Thank you so much for your comment, it’s great to get the perspective of someone who’s dealt with these issues first hand. I think the competition element is definitely there – in some cases in their bios they list how many suicide attempts they have made. It’s almost like the more ‘damaged’ you can portray yourself the better? xx

That was such a great post. It’s so disturbing that pictures on such serious issues are so easily available to people, especially impressionable young people. I definitely think Instagram should be controlling this better – if they can regulate nudity then they can regulate this, it’s just as serious an issue xx

D Is For…

Thank you! I completely agree, it would be one thing if Instagram didn’t regulate anything and it was a completely free space – but it’s evidently not, and they have the power to block this content. So why aren’t they?! xx

This is so weird! I feel sorry for people who actually participate in these tags 🙁 Shame on Instagram!! x

So do I, I think when it comes to deciding whether to ban the tags or not Instagram should be considering the fact that these are real people – most of them very young – being directly influenced. Thanks for your comment 🙂 xx

As much as this absolutely sickens me, I can also understand where Instagram could feel like there isn’t a clean win in this situation. If they leave it up, at least they can monitor it and take down truly disturbing images (whether they are or not is another story); if they take it down, it will continue on other sites. Tumblr has horrid problems with it. I personally think that it should be taken down and the hashtags should be banned, as they would be if they were pictures promoting murder. These pictures are promoting killing someone; I feel the grey area comes along online because it is yourself and you are not harming others. I will be interested to see what action, if any, Instagram takes as people become more aware of the growing problem. Great post, thank you for helping to bring these issues to light.

Yeah I definitely see your point, and thought myself about whether if they were to ban these tags, would the people in the communities just find a way around it – start using other hashtags etc? But I agree that it’s no excuse for Instagram to not even try; at the moment by allowing the hashtags to exist it’s almost like they’re being condoned! Thanks for reading and sharing your thoughts 🙂 xx

It’s so upsetting that people do these things, and it’s more upsetting that social media sites allow young people to encourage each other to carry on! Fingers crossed something will be done about this; if removing the hashtag and anything related saves just one life, then I’d say there’s a reason for Instagram to remove it!

Thanks for sharing

Aimee X

Hi Claire,

I know this is an old post but I only just read it. I completely agree with everything you have written here. And it was very well written too.

Thought you would like to know that this has now been removed by Instagram. I know there will still be ways for kids in particular to see this, but hopefully the removal of those hashtags will help – even if it just stops one person it’s worth it.

Think your blog is great 🙂

Sarah